Lara Li

58.com

Senior Design Manager

She has worked in the Internet industry for more than ten years, in design management and expert roles at Tencent, Sina and other companies. At 58.com she is in charge of the platform business, coordinating multiple parties across several product lines, and has accumulated rich project experience. She specializes in exploring design methods and in optimizing user experience from a platform perspective.

Design philosophy: design is an attitude toward life, the pursuit of perfection.

The Way to Drive Data with Design Thinking

Data thinking has become increasingly popular in recent years, particularly for exploring replicable operational processes that are standardised through design outcomes. These processes are continually consolidated into a design knowledge management system to support team growth, improving the overall efficiency and effectiveness of the business.

By contrast, the effect of design outcomes on projects has remained vague: design work is often questioned by product teams and lacks rational indicators. This session is a case study of how platform-based multi-scenario testing and repeated rounds of optimization made the design process measurable and ultimately delivered a steady improvement in the data.

The 58.com UX Center insists on data-driven design. It tracks user paths for in-depth data analysis, has built a user data centre for designers, develops efficiency tools, and creates design rules best suited to users in lower-tier markets. It deploys large volumes of creative material to verify design-driven changes across millions of clicks, keeps digging into the factors that affect the data, sets optimization directions together with product teams, carries out implementation together with engineering, and makes design systematic, standardized and process-driven. In this workshop, the speaker will share her thoughts on how to improve the design process.

Specifically, the presenter will show how to validate designs with common user behaviour analysis models, including user funnel analysis, user retention analysis, user demographic analysis and brand word-of-mouth positioning research; how to then define optimisation indicators and validation criteria; how to select the best solution for testing; and how to respect the results and make design decisions quickly.

Using 58.com's platform products as the lever, the team built a common component that improves conversion through small-scale tests at traffic entry points, driving improvement across the whole business. From gathering feedback to integrating it into design, and from R&D delivery to observing online data, the work finally forms a reusable method.

Workshop outline

1. What is data-based thinking

1.1 Experience problems seen through data: deductive, inductive and analogical reasoning

1.2 Data-driven design objectives: the *SMART principles, task allocation and modular management

1.3 Data for measuring design results: setting design acceptance criteria at project kickoff, design quality assessment and manpower-efficiency criteria

1.4 Improving multi-dimensional thinking at the planning, execution and performance levels; the Six Thinking Hats method

2. Introduction to common analytical models

2.1 Common data backend metrics: *UV, *PV, *CTR and other observation methods

2.2 Retention analysis: based on changes in the platform's user retention over one year, explore how design can improve user stickiness, and design product paths that extend the user life cycle

2.3 User funnel analysis: observe user paths and find the churn points that hurt conversion. A funnel shows the conversion rate at each stage; comparing the data across its stages reveals where users drop off, which points to the direction for optimization

2.4 User stratification analysis: analyse user attributes and behavioural characteristics, and evaluate design style, design philosophy and design principles through multiple rounds of tests of design solutions in the same placement, shown to different user segments at different times; then define the best design order

2.5 Result-oriented analysis: all data has pitfalls; how to cope with a changing market by combining objective and subjective judgement in design decisions

2.6 Competitive analysis: how to analyse the strengths and weaknesses of similar products and strengthen competitiveness

2.7 An introduction to A/B testing and to time, loop, tree and queue analysis thinking, among other methods

2.8 Data backend basics: building data metrics and designing tracking points (event instrumentation) for optimization
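The funnel analysis in 2.3 comes down to simple arithmetic: each stage's step conversion is its user count divided by the previous stage's, and the stage with the lowest step conversion marks the biggest churn point. The Python sketch below illustrates this under stated assumptions: the stage names and counts are hypothetical, and each count is taken to be the number of distinct users who reached that stage in the same period.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence, Tuple


@dataclass
class FunnelStep:
    stage: str
    users: int
    step_conversion: float     # share of the previous stage's users who reached this stage
    overall_conversion: float  # share of the first stage's users who reached this stage


def funnel_report(stages: Sequence[Tuple[str, int]]) -> List[FunnelStep]:
    """Convert ordered (stage, distinct users) pairs into per-stage conversion rates."""
    report: List[FunnelStep] = []
    if not stages:
        return report
    top = stages[0][1]
    previous: Optional[int] = None
    for stage, users in stages:
        if previous is None:
            step = 1.0  # the first stage is the funnel's baseline
        else:
            step = users / previous if previous else 0.0
        overall = users / top if top else 0.0
        report.append(FunnelStep(stage, users, step, overall))
        previous = users
    return report


def largest_churn_point(report: List[FunnelStep]) -> str:
    """Return the stage whose entry loses the largest share of users (lowest step conversion)."""
    if len(report) < 2:
        raise ValueError("a funnel needs at least two stages")
    return min(report[1:], key=lambda s: s.step_conversion).stage


if __name__ == "__main__":
    # Hypothetical counts for illustration only; not 58.com data.
    stages = [("home", 10000), ("listing", 6000), ("detail", 4500), ("contact", 900)]
    report = funnel_report(stages)
    for s in report:
        print(f"{s.stage:<8} {s.users:>6} step={s.step_conversion:.0%} overall={s.overall_conversion:.0%}")
    print("largest churn point:", largest_churn_point(report))
```

With these made-up numbers, the step from `detail` to `contact` keeps only 20% of users, so it would be the first place to look for design problems.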

3. Common experience-driven approaches to fully implementing design

3.1 The advantages and disadvantages of the two-track drive model, lean iteration and cyclical optimization; how to achieve rapid implementation, with a full walkthrough of design experience from research to analysis, solution, testing, verification, specification and rollout

3.2 Requirement hierarchy and opportunities in release scheduling, driving optimisation efficiency and design evaluation

3.3 Goal management and quantification; working with the engineering department on tracking-point design; adding tracking to version requirements and establishing a tracking mechanism

3.4 How to collect offline and online user data through other channels
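Tracking points (2.8 and 3.3) are easier to agree on with engineering when they are written down as a tracking plan that logged events can be checked against. The following is a minimal sketch of that idea, not 58.com's actual mechanism: `TrackingPoint`, `check_event`, the event name `home_entry_click` and its property names are all hypothetical, and app versions are assumed to be purely numeric dotted strings.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Tuple


@dataclass(frozen=True)
class TrackingPoint:
    """One entry in a hypothetical tracking plan agreed by design, product and engineering."""
    event: str                    # event name, e.g. "home_entry_click"
    page: str                     # page on which the event should fire
    required_props: FrozenSet[str] = frozenset()
    since_version: str = "1.0.0"  # release whose requirements added this point


def parse_version(version: str) -> Tuple[int, ...]:
    """Parse a purely numeric dotted version so that '2.10.0' sorts after '2.9.9'."""
    return tuple(int(part) for part in version.split("."))


def check_event(plan: Dict[str, TrackingPoint], event: Dict[str, str]) -> List[str]:
    """List the ways one logged event deviates from the plan; an empty list means it conforms."""
    point = plan.get(event.get("event", ""))
    if point is None:
        return [f"unplanned event: {event.get('event')!r}"]
    problems: List[str] = []
    if event.get("page") != point.page:
        problems.append(f"wrong page: expected {point.page!r}, got {event.get('page')!r}")
    missing = point.required_props - set(event)
    if missing:
        problems.append(f"missing properties: {sorted(missing)}")
    if parse_version(event.get("app_version", "0")) < parse_version(point.since_version):
        problems.append("fired by a release older than the one that introduced this point")
    return problems


if __name__ == "__main__":
    plan = {
        "home_entry_click": TrackingPoint(
            "home_entry_click", "home", frozenset({"entry_id", "position"}), "2.3.0"
        ),
    }
    good = {"event": "home_entry_click", "page": "home", "entry_id": "jobs",
            "position": "1", "app_version": "2.3.1"}
    bad = {"event": "home_entry_click", "page": "home", "entry_id": "jobs",
           "app_version": "2.2.9"}
    print(check_event(plan, good))
    print(check_event(plan, bad))
```

Tying each point to the release that introduces it is one way to read "adding version requirements": events from older builds can then be filtered out before the data is compared.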

4. How to build and design sentiment indicators

4.1 How to set up a questionnaire for emotional-response research

4.2 How to drive satisfaction through changes in design style and the creation of atmosphere

4.3 Testing and exploring layout, copy and style solutions to increase business appeal by 50%

4.4 The impact of colour, light and shadow, materials and volume on human mental models
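When layout, copy or style variants are compared (4.3, and the A/B testing in 2.7), a common check is whether the CTR difference between two variants is larger than chance would explain. The workshop does not specify its statistics, so the sketch below swaps in one standard option, a two-sided two-proportion z-test with a pooled rate; the variant names and counts are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Variant:
    name: str
    impressions: int  # times the design variant was shown
    clicks: int       # clicks on the variant

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions


def two_proportion_z_test(a: Variant, b: Variant) -> Tuple[float, float]:
    """Two-sided z-test for CTR(b) - CTR(a) using the pooled click rate.

    Returns (z, p_value). Assumes independent impressions and large samples.
    """
    if a.impressions <= 0 or b.impressions <= 0:
        raise ValueError("both variants need impressions")
    pooled = (a.clicks + b.clicks) / (a.impressions + b.impressions)
    se = math.sqrt(pooled * (1 - pooled) * (1 / a.impressions + 1 / b.impressions))
    if se == 0:
        raise ValueError("CTR is 0% or 100% in both variants; the test is undefined")
    z = (b.ctr - a.ctr) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical traffic split for two entry-point designs.
    control = Variant("current_layout", impressions=10000, clicks=500)
    candidate = Variant("new_layout", impressions=10000, clicks=600)
    z, p = two_proportion_z_test(control, candidate)
    print(f"CTR {control.ctr:.1%} -> {candidate.ctr:.1%}, z={z:.2f}, p={p:.4f}")
```

A small p-value (0.05 is a common threshold) suggests the lift is unlikely to be chance alone; whether the lift matters for the business remains the objective-plus-subjective judgement described in 2.5.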

*Notes

- The SMART principles state that work objectives and performance management should meet five criteria. Objectives should be S (Specific): specific; M (Measurable): measurable; A (Attainable): attainable and achievable; R (Relevant): relevant to the broader goals; T (Time-bound): clearly time-bound.

- UV (Unique Visitor) is the number of distinct users who visit a site within one day, usually identified by cookies. Each client that visits the site counts as one visitor, so UV roughly corresponds to the number of computers that visit the website.

- PV (Page View) is the number of page views, measuring how many pages a site's users view. Within a given statistical period, each time a user opens or refreshes a page counts as one view, so repeated openings or refreshes of the same page all add to the total.

- CTR (Click-Through Rate) is the number of times users click through to a site (or an element such as an ad or entry point) divided by the total number of times it was shown.
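The three definitions above can be computed from a raw event log. The sketch assumes a hypothetical log format (`LogEvent` with a day, a cookie-based `visitor_id` and an event `kind` of `pageview`, `impression` or `click`); real analytics backends define these fields differently.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class LogEvent:
    day: str         # calendar day, e.g. "2024-05-01"
    visitor_id: str  # cookie-based visitor identifier
    kind: str        # "pageview", "impression" or "click" (hypothetical event types)


@dataclass
class DailyMetrics:
    uv: int     # distinct visitors who viewed at least one page that day
    pv: int     # every page open or refresh counts once
    ctr: float  # clicks / impressions


def daily_metrics(events: Iterable[LogEvent], day: str) -> DailyMetrics:
    """Compute UV, PV and CTR for one day from a flat event log."""
    visitors = set()
    pv = impressions = clicks = 0
    for e in events:
        if e.day != day:
            continue
        if e.kind == "pageview":
            pv += 1
            visitors.add(e.visitor_id)
        elif e.kind == "impression":
            impressions += 1
        elif e.kind == "click":
            clicks += 1
    ctr = clicks / impressions if impressions else 0.0
    return DailyMetrics(uv=len(visitors), pv=pv, ctr=ctr)


if __name__ == "__main__":
    log = [
        LogEvent("2024-05-01", "a", "pageview"),
        LogEvent("2024-05-01", "a", "pageview"),  # refresh: PV grows, UV does not
        LogEvent("2024-05-01", "b", "pageview"),
        LogEvent("2024-05-01", "a", "impression"),
        LogEvent("2024-05-01", "b", "impression"),
        LogEvent("2024-05-01", "b", "impression"),
        LogEvent("2024-05-01", "b", "impression"),
        LogEvent("2024-05-01", "b", "click"),
        LogEvent("2024-05-02", "c", "pageview"),  # different day, ignored
    ]
    print(daily_metrics(log, "2024-05-01"))  # DailyMetrics(uv=2, pv=3, ctr=0.25)
```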

Workshop format

1. The presenter shares methods and examples with interactive Q&A; attendees form business groups, choose a project type, simulate user actions and record user behaviour

2. Groups draw lots to claim tasks, then use simulation exercises, brainstorming and optimisation analysis to produce quantifiable solutions, and present their implementation steps and target expectations

3. Groups recruit users from neighbouring tables to evaluate the experience of their solutions, then review the feedback to validate the strengths and weaknesses of their approach

4. Expert critique and group voting for the best solution; Q&A session

5. Workshop summary

Target audience

1. UI/visual designers (3-10 years' experience, intermediate to senior)

2. Interaction designers (1-7 years' experience)

3. Product designers (1-7 years' experience)

Takeaways

1. Help attendees understand data analysis models, including retention, funnel and behavioural path analysis, and other methods for validating design

2. Help attendees apply data-based thinking: set goals, execute and implement them, and improve business conversion through standardized processes and data collection

3. Approach design work with a product perspective and holistic thinking, strengthening design's role as a business driver
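Retention analysis (2.2, and the first takeaway above) is often reported as day-N retention per cohort. The sketch below uses one conventional definition, assumed here rather than taken from the workshop: users are grouped by their first active day and counted as retained if they are active again exactly N days later. The one-year view in 2.2 would apply the same idea with longer windows, such as day-30 or monthly cohorts; the user IDs and dates are hypothetical.

```python
from collections import defaultdict
from datetime import date, timedelta
from typing import Dict, Iterable, List, Set, Tuple


def day_n_retention(activity: Iterable[Tuple[str, date]], n: int) -> Dict[date, float]:
    """Day-N retention per cohort.

    Users are grouped by their first active day; a user counts as retained if they
    are active again exactly n days after that first day.
    """
    days_by_user: Dict[str, Set[date]] = defaultdict(set)
    for user, day in activity:
        days_by_user[user].add(day)

    cohorts: Dict[date, List[bool]] = defaultdict(list)
    for days in days_by_user.values():
        first = min(days)
        cohorts[first].append(first + timedelta(days=n) in days)

    # Share of retained users in each cohort, ordered by cohort date.
    return {first: sum(flags) / len(flags) for first, flags in sorted(cohorts.items())}


if __name__ == "__main__":
    d0 = date(2024, 5, 1)
    log = [
        ("u1", d0), ("u1", d0 + timedelta(days=1)),
        ("u2", d0),
        ("u3", d0 + timedelta(days=1)), ("u3", d0 + timedelta(days=2)),
    ]
    for cohort, rate in day_n_retention(log, n=1).items():
        print(cohort, f"{rate:.0%}")
```

Comparing these per-cohort rates before and after a design release is one way to see whether a change improved user stickiness.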

Design factors affecting data

Channel repurchase process conversion differences

Conversion funnel case

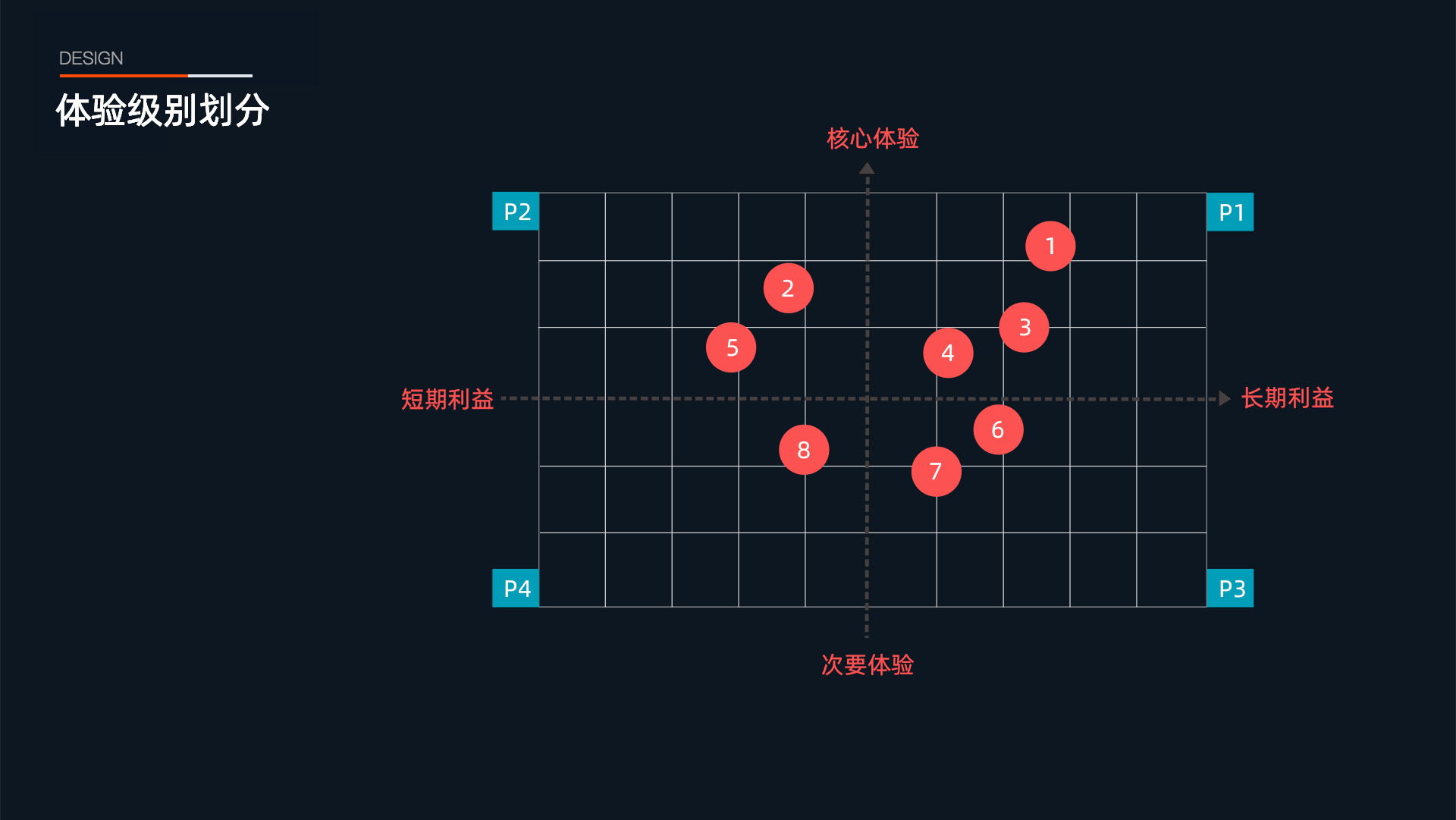

Requirement grading

Two-track optimisation model

Manpower assessment methods

User conversion funnel analysis

Platform home page revamp
